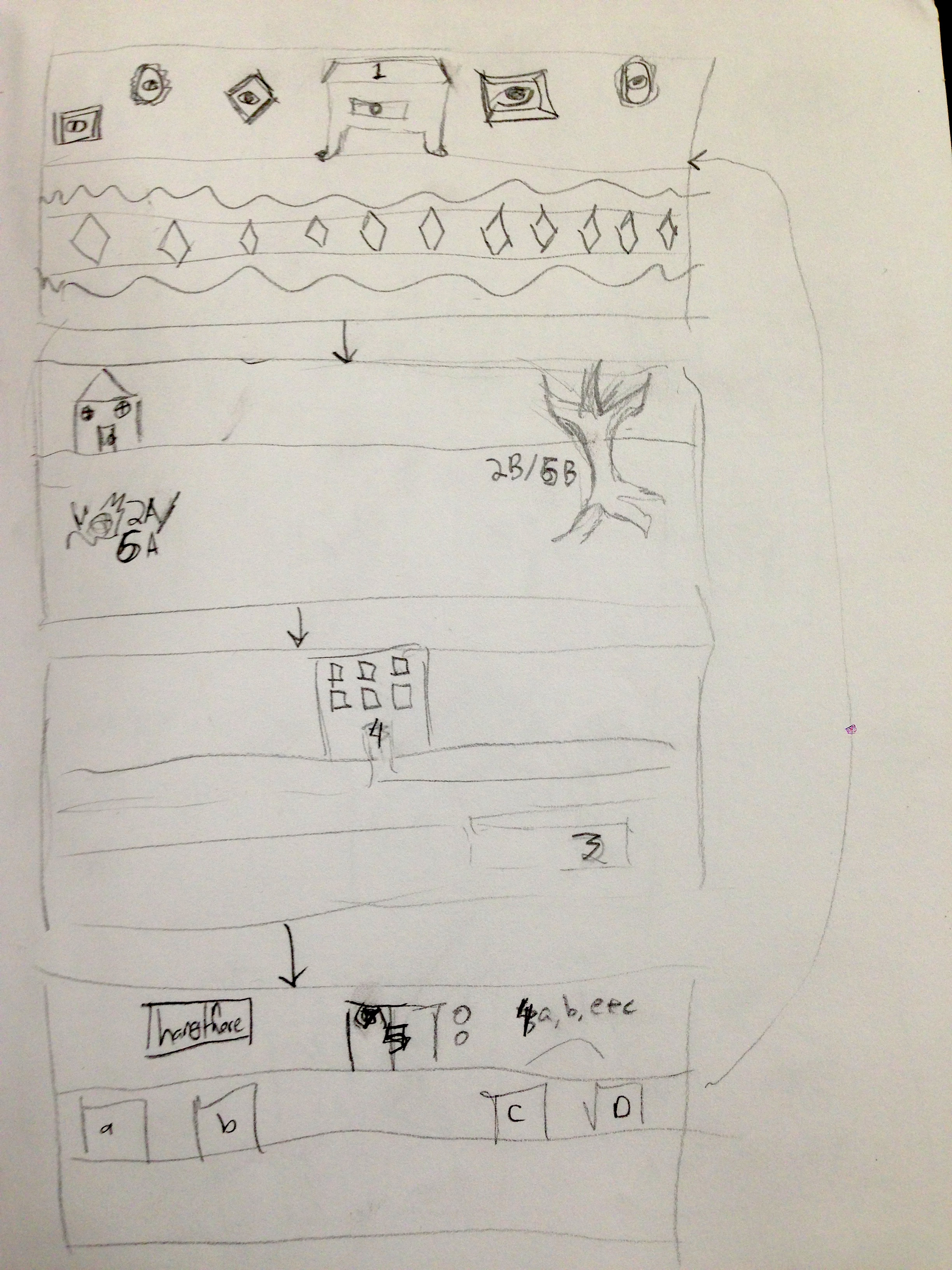

This project went through many iterations before it took the form that I presented at Open Studios on Tuesday. I knew I wanted to experiment with sound and the placement of sound in space, and I knew I wanted to create a space of interaction that felt separated somehow from the world around it. I began by conceiving of the piece as recordings of different texts playing from different places in a room that you would walk through. After experimenting with motion sensors, however, I knew I wanted to incorporate them. Initially, I thought the control would be limited to the sound following viewers as they walked past the exhibit, but my own desire to create a more insular space, combined with the limitations of the technology, pushed me toward a piece that would use hand control. I settled on a theremin-esque setup, where the movement of the user’s hand would change both the placement of the sound in the room and its volume.
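To make the hand-control idea concrete, here is a minimal sketch of how a hand position could be turned into a stereo pan and a volume. It is not the script I used in the piece: the function name `hand_to_audio`, the choice of left/right for placement and height for volume, and the millimetre ranges are all assumptions made for illustration.

```python
def clamp(value, low, high):
    """Keep value inside the closed interval [low, high]."""
    return max(low, min(high, value))


def hand_to_audio(x_mm, y_mm, x_range=(-200.0, 200.0), y_range=(100.0, 400.0)):
    """Map a hand position to (pan, volume).

    x_mm: left/right offset of the hand from the sensor (assumed units).
    y_mm: height of the hand above the sensor (assumed units).
    Returns pan in [-1.0, 1.0] (left to right) and volume in [0.0, 1.0].
    The ranges are hypothetical and would need tuning to the real sensor.
    """
    x_lo, x_hi = x_range
    y_lo, y_hi = y_range

    # Normalise each axis to 0..1, clamping positions outside the range.
    x_norm = (clamp(x_mm, x_lo, x_hi) - x_lo) / (x_hi - x_lo)
    y_norm = (clamp(y_mm, y_lo, y_hi) - y_lo) / (y_hi - y_lo)

    pan = 2.0 * x_norm - 1.0   # 0..1 becomes -1..1
    volume = y_norm            # a higher hand gives a louder sound
    return pan, volume


if __name__ == "__main__":
    print(hand_to_audio(0.0, 250.0))    # hand centred, mid-height
    print(hand_to_audio(-500.0, 50.0))  # far left and below the range
```

Clamping matters here because a hand drifting out of the sensor's range should hold the sound at the edge rather than produce values the audio side cannot use.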

Once I had formulated the way I wanted to shape the piece, the next big challenge for me was tone. I coded the motion sensor script, and then set out to create the music that would be playing for users to control. I had initially envisioned something more ambient and dark, but as I drafted song after song and tried to think about what would be fun and engaging to control, I realized that a more peaceful and cheerful tone would serve the piece better. The process of creating the final song was incredibly rewarding, and ultimately I’m happier with it than I was with any of my more melancholy drafts.

The next challenge was visual. I struggled a lot with providing visual cues for how to interact with the piece, and with how to reward the user visually, on top of the sonic element, for interacting. I set up a projector with lights changing color to add to the tone, and beta-testers of the piece enjoyed using the projector’s light to make shadows as they controlled the music, so I moved the motion sensor into a position that would make this more intuitive and framed the projector screen with diagrams of different shadow puppets. In a later test, this was semi-successful: users immediately knew how to interact with the projector, but the effect that the motion sensor was having wasn’t as clear. In my final draft, I added a visual portion of code, which allows the motion sensor to change the color on the screen as well as the sound.
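As an illustration of that visual portion, the sketch below maps a normalised hand position to an on-screen color. It is an assumption about how such a mapping could work, not the code from the piece: `hand_to_color` is a hypothetical name, and tying hue to the horizontal position and brightness to the height is my own choice for the example.

```python
import colorsys


def hand_to_color(x_norm, y_norm):
    """Map a normalised hand position (each axis 0..1) to an (r, g, b) tuple.

    Hue follows the horizontal position and brightness follows the height.
    Both mappings are illustrative assumptions.
    """
    x_norm = max(0.0, min(1.0, x_norm))
    y_norm = max(0.0, min(1.0, y_norm))

    # Use only three quarters of the hue wheel so the far right
    # does not wrap back around to the same red as the far left.
    hue = x_norm * 0.75
    # Keep a floor on brightness so the screen never goes fully black.
    value = 0.3 + 0.7 * y_norm

    r, g, b = colorsys.hsv_to_rgb(hue, 1.0, value)
    return tuple(round(channel * 255) for channel in (r, g, b))


if __name__ == "__main__":
    print(hand_to_color(0.0, 1.0))  # far left, hand held high
    print(hand_to_color(0.5, 0.5))  # centre, mid-height
```

Driving the color from the same hand position that drives the sound is what makes the cause and effect legible: the screen changes at the moment the music does.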

This final version debuted at Open Studios, and the addition of the visual solved a lot of problems and moved the piece along quite a bit. People were clearer about how to interact with the piece and how they were affecting it. The setup still had technical problems of its own: the size and placement of the Leap made the area of interaction smaller than people expected it to be, and because I had only programmed for one hand input at a time, the program sometimes froze or didn’t respond when there was more than one hand in the area of the Leap.
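One possible way to handle the multiple-hand problem in a rework is to always reduce whatever the sensor reports to a single controlling hand. The sketch below is hypothetical: the `Hand` class and `pick_active_hand` function are stand-ins I made up for the example, not part of the Leap software, and the rule of preferring the hand already in control is only one reasonable option.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Hand:
    """Hypothetical stand-in for one tracked hand in a sensor frame."""
    hand_id: int
    x_mm: float
    y_mm: float


def pick_active_hand(hands: Sequence[Hand],
                     previous_id: Optional[int] = None) -> Optional[Hand]:
    """Choose one hand to control the piece, however many are visible.

    Returns None when no hand is present, so the caller can hold the
    last sound and color instead of stalling.
    """
    if not hands:
        return None

    # Prefer the hand that was already in control, so a second person
    # reaching in does not steal the sound mid-gesture.
    for hand in hands:
        if hand.hand_id == previous_id:
            return hand

    # Otherwise take the hand closest to the centre of the sensor.
    return min(hands, key=lambda h: abs(h.x_mm))


if __name__ == "__main__":
    frame = [Hand(1, -120.0, 200.0), Hand(2, 30.0, 250.0)]
    print(pick_active_hand(frame))                 # picks the centred hand
    print(pick_active_hand(frame, previous_id=1))  # keeps the earlier hand
```

The rest of the program then only ever sees one hand or none, which keeps the single-hand logic intact while avoiding the case it was never written for.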

Overall, the project was very successful. People enjoyed interacting with it and found it intuitive and evocative, particularly commenting on the relaxed yet playful nature of the piece. I have some ideas for reworking and fine-tuning it in the future, but I’m very satisfied with the end result.