Categories

Blackspace

Blackspace: Astrophobia

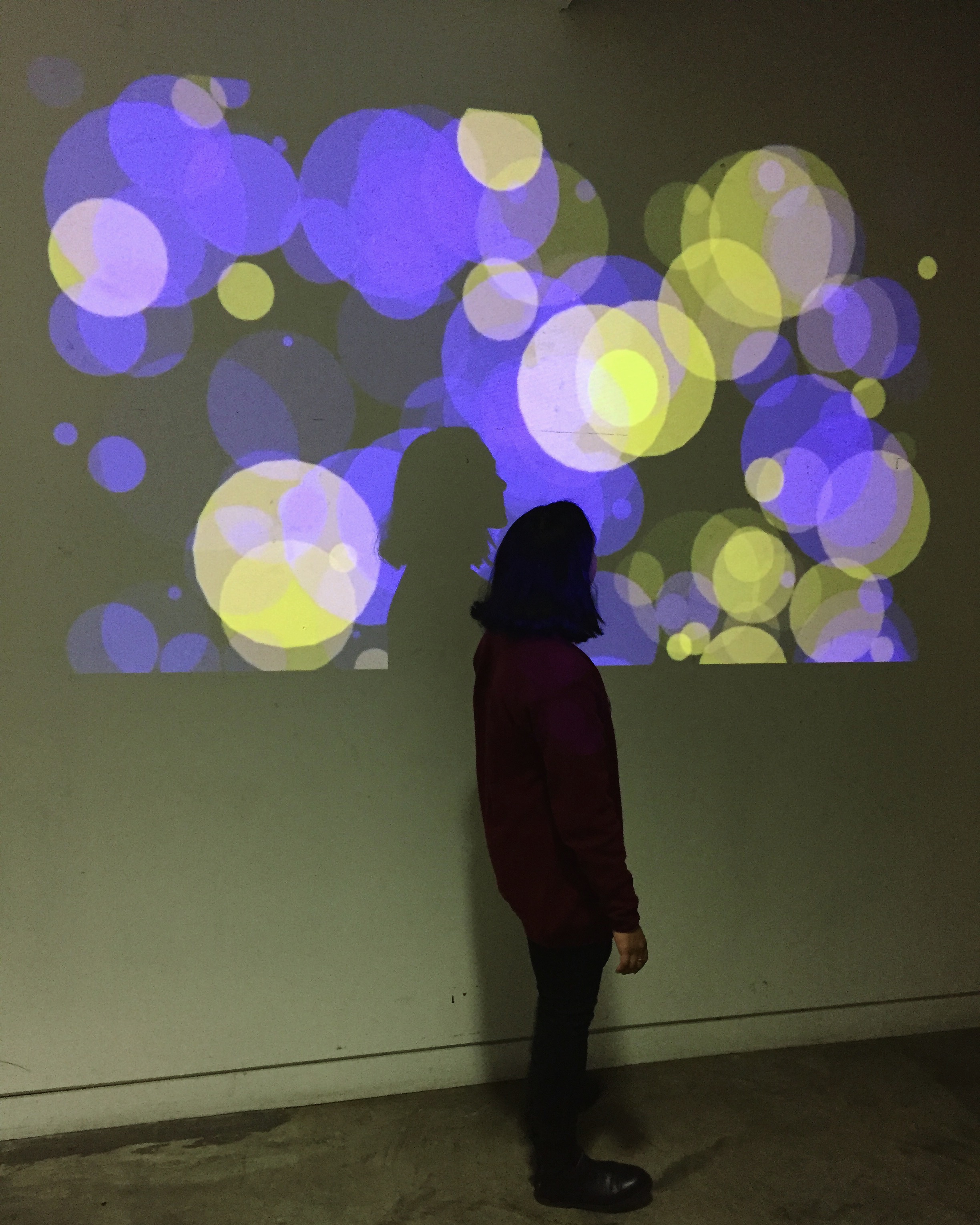

Blackspace was modeled after our darkness theme, which prompted me to change my project. It was more or less the same – polygons bouncing off the sides of the sketch along to a track by Fort Romeau. However, in one of our open…

Blackspace: lttl mtch grl

I re-performed my analog system from earlier in the semester, lttl mtch grl. The system had few to no revisions. The only major change was adding matches to be lit in order to keep track of time. The…

Blackspace: Urban Obstruction

The Blackspace projects are systems that explore the constraints of darkness. My project plays on the idea of urban obstruction and access to public spaces. The projected video presents the reality of fenced open areas on the New York…

Blackspace: Text + Movement

This was a text, video, and sound piece that used an ArrayList to call up individual words from a song’s lyrics at random as the song plays, creating a counterpoint to the song, the intended meaning of the…

Blackspace: Point Cloud

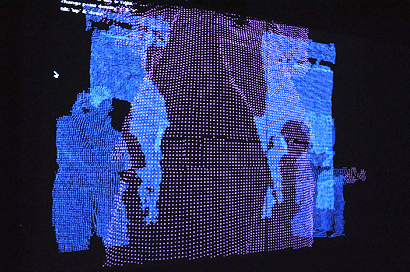

The idea of working in complete darkness was exciting, but I had a hard time coming up with a system that would successfully translate to that situation. When I began working with the Kinect for my conference project, I realized…