My system glitches live-stream video from the computer’s webcam. The project was a direct response to a glitch code developed in class that altered the pixels of a given image. After that, I was determined to create a similar effect that could interact with the environment by means of video. Because of my interest in urban design and architecture, I saw the system as having the potential to spark interaction in the built environment.

I consider this project a breakthrough in my understanding of, and experience with, systems. The desired outcome was the result of complete randomness. Since I didn’t know how to achieve the goal of creating a video glitch program, I kept pasting and deleting code in my Processing windows. At some point on this journey of losing control, the system surprised me and presented a result.

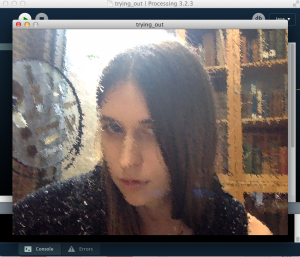

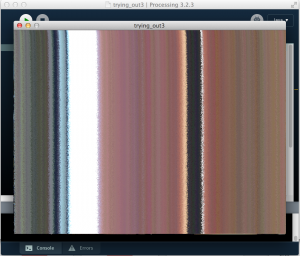

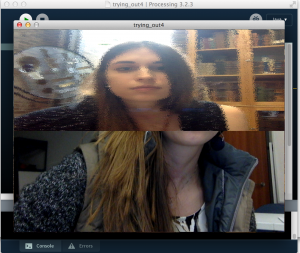

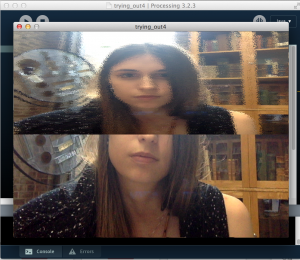

I created several versions of the system by altering the values used for pixel modification in the for loop. The first version gently mutates the pixels, creating a sort of pulsating grain, as shown in the screenshots. The second version is abstract: it multiplies the colors of the webcam input and translates them into constantly moving lines. Each line is a response to, and an evolution of, the environment. The system evolves this way endlessly. The last version is the most surprising, since it builds on the input from the previous run of the program. The lower part of the image is a capture from the previous run, while the top is similar to the first version of the system.
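The original Processing code is not reproduced here, so the following is only a minimal sketch, in Python, of the kind of per-pixel loop described above. The function name `glitch_pixels`, the `amount` parameter, and the tiny four-pixel `frame` are all assumptions made for illustration; the actual system reads frames from the webcam and may modify pixels quite differently.

```python
import random


def glitch_pixels(pixels, amount, rng):
    """Return a copy of `pixels` with each RGB channel nudged by a random offset.

    `pixels` is a list of (r, g, b) tuples; `amount` is the largest offset
    applied to a channel. Both names are hypothetical, not from the original code.
    """
    out = []
    for r, g, b in pixels:  # the per-pixel for loop
        out.append(tuple(
            # shift the channel, then clamp it back into the 0..255 range
            max(0, min(255, c + rng.randint(-amount, amount)))
            for c in (r, g, b)
        ))
    return out


frame = [(120, 64, 200)] * 4  # stand-in for one very small webcam frame
rng = random.Random(0)        # seeded so repeated runs give the same result

gentle = glitch_pixels(frame, 8, rng)    # small offsets: a grain-like flicker
harsh = glitch_pixels(frame, 200, rng)   # large offsets: heavy color distortion
```

Under these assumptions, changing a single value in the loop (`amount`) is enough to move from a gentle grain to a much more abstract image, which is roughly how the versions above differ from one another.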

My system demonstrates a list of conditions developed during class. It embodies a set of relationships between the live-stream image and the output of the program. It is a process of constant motion. It is also self-evolving or self-adapting, since it makes autonomous decisions and builds on the input to present unexpected results. The system has rules and boundaries defined by the Processing code. It exists independently of the observer and, if not stopped, can go on infinitely.