Claim 1: If I communicate to other people through a sound visualizer and text-to-speech library, then it will feel equivalent to or easier than talking to people face-to-face. (Hint: this is false.)

Claim 2: If an installation setup meets the correct criteria, then it is quite simple to deceive an audience regarding the legibility of artificial intelligence. (Hint: this is surprisingly true.)

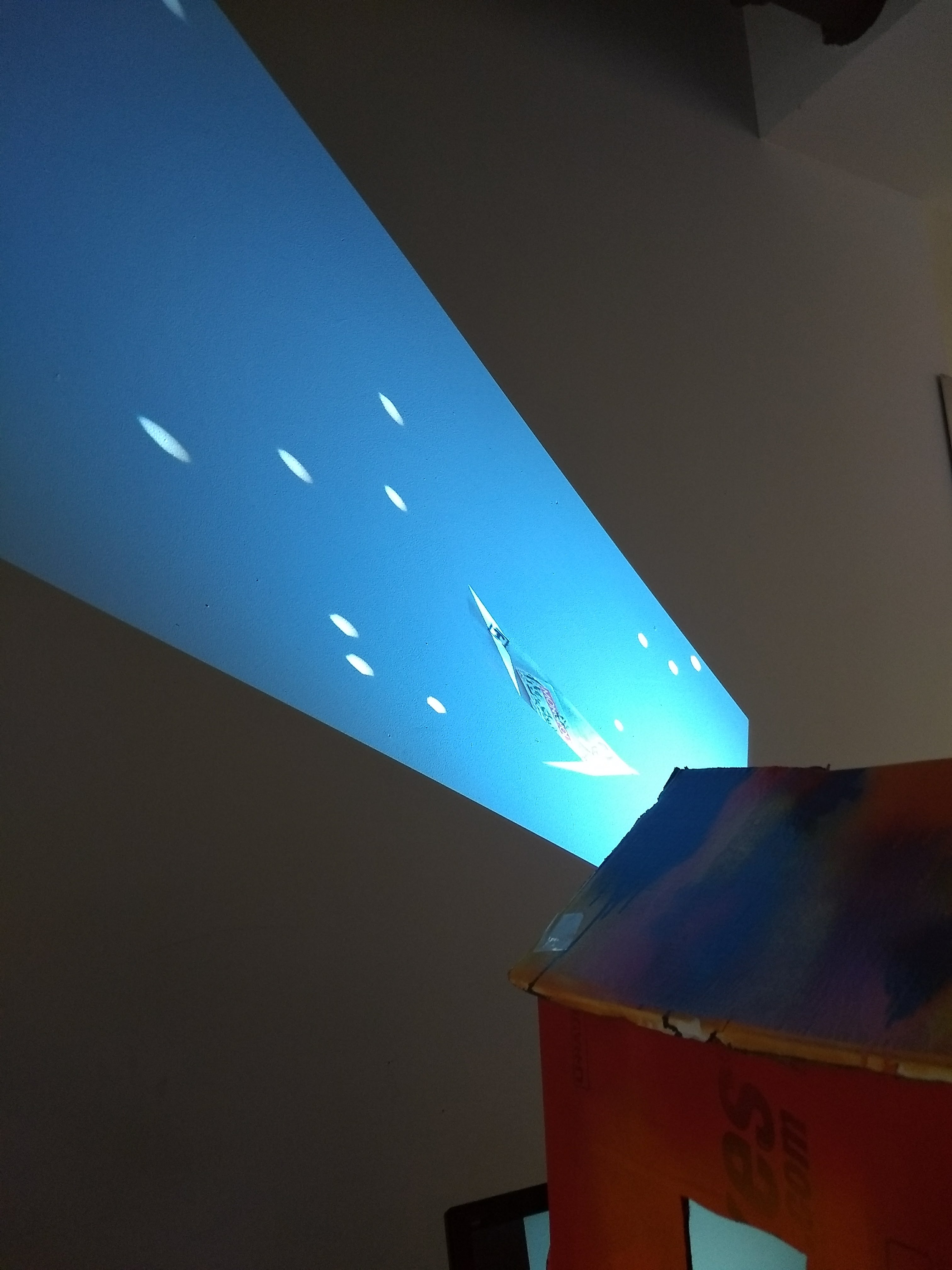

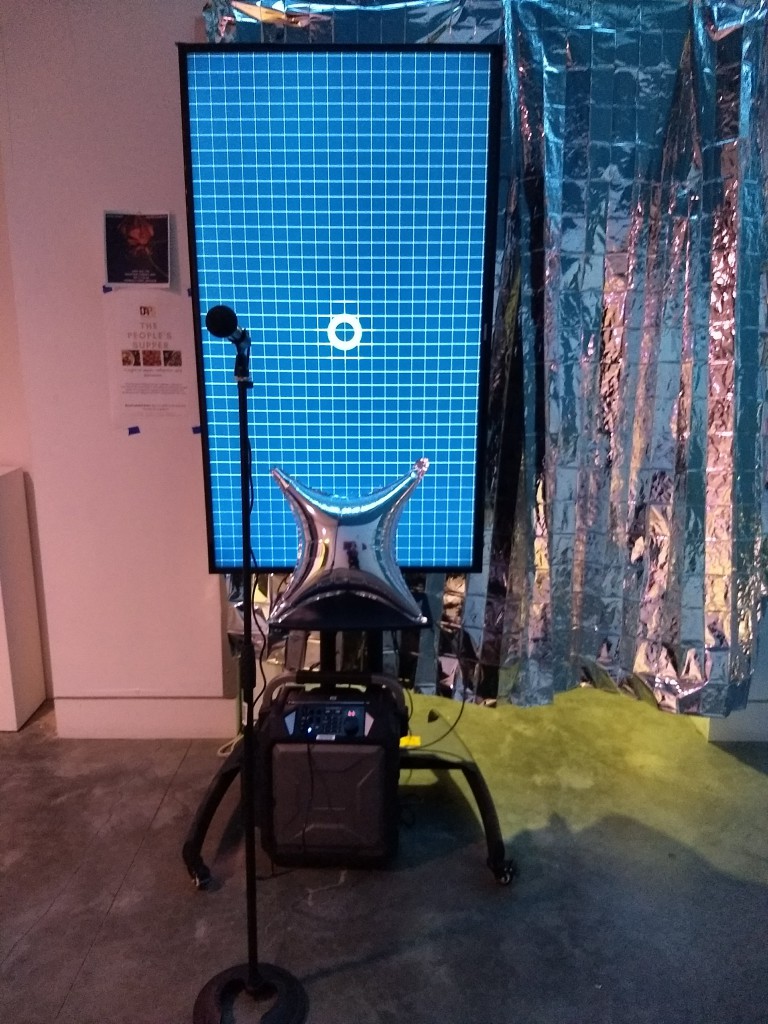

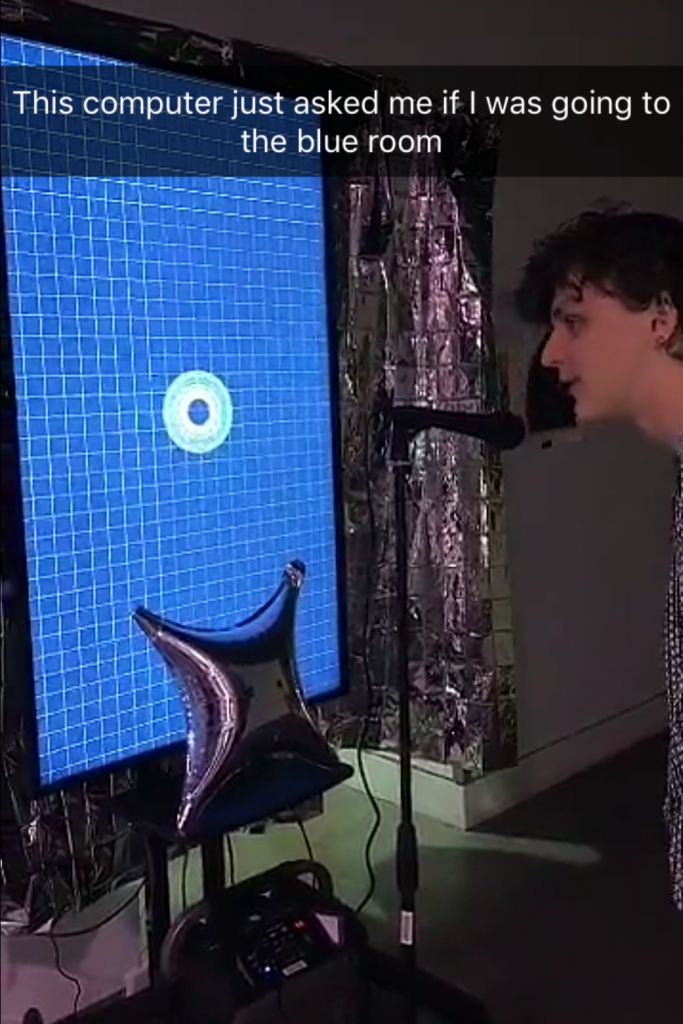

For the Supernova Art Party 2018, I created an installation revolving around a pseudo-artificial intelligence program. I set up a large monitor with a microphone and speaker in front to encourage the audience to “speak” to the monitor. I connected my laptop to the monitor via an HDMI cable and hid in a curtained area behind the monitor. The program I created and ran was a simple audio visualizer, where the central circle changed diameter depending on the volume of the surrounding area. There were also some extra visual effects (the circle gradually changing color, a fade-out effect on the circles of different diameters, and a grid background), as well as a text-to-speech feature. This is where the magic happened: I had a small text input box hidden at the bottom of the screen (which, due to resolution differences, coincidentally didn’t show up on the monitor), where I could enter text and, upon pressing the “return” key, have a text-to-speech library read out what I had typed. Thus, I could listen to the user’s questions to my program and type a response from my computer, giving the user the illusion that my program could listen and respond without any outside help.
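For the curious, the volume-to-diameter idea can be sketched in a few lines of Python. This is not the code I ran at the party, just a minimal illustration: the function names, the pixel range (50 to 400), and the smoothing factor are all assumptions made up for this example, and it expects audio samples already normalized to the range -1.0 to 1.0.

```python
import math

def rms(samples):
    """Root-mean-square level of a block of samples in the range [-1.0, 1.0]."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def volume_to_diameter(level, min_d=50.0, max_d=400.0):
    """Linearly map a level in [0, 1] to a diameter in pixels (hypothetical range).

    Levels outside [0, 1] are clamped so the circle never leaves its bounds.
    """
    level = max(0.0, min(1.0, level))
    return min_d + level * (max_d - min_d)

def smooth(previous, target, factor=0.2):
    """Move part of the way from the previous diameter toward the target,
    so the circle eases between sizes instead of jumping every frame."""
    return previous + factor * (target - previous)

# One "frame": measure the loudness of a block of samples, then resize the circle.
block = [0.5, -0.5, 0.5, -0.5]       # stand-in for microphone input
target = volume_to_diameter(rms(block))
diameter = smooth(50.0, target)      # starting from the resting diameter
```

In a real visualizer this would run once per animation frame, with the sample block coming from the microphone and the resulting diameter passed to whatever drawing call the graphics library provides.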

I must admit, as a moderately shy person with absolutely no showmanship experience, I found my idea of hiding behind a curtain for the party as part of my project pretty genius. I knew, however, that I still had to tackle the problem of staying hidden while remaining aware of how people were interacting with my piece. This turned out to be the hardest part of my installation. I found it difficult to tell people who were curious about my installation apart from people who were just trying to get from point A to point B and had no interest in it, especially when I couldn’t see them through my Mylar curtain. The curtain seemed semi-transparent during daylight, but once it got darker it became much harder to see through, and I found I couldn’t rely on it to see people. All I could rely on was their voices. This worked out somewhat, as people felt the need to treat it like any other talking device, such as Google Home or Alexa, and initiate the conversation. It still caused some anxiety, however, as I felt pressure to type and respond quickly, respond with wit, and do all this without seeing the other person (or sometimes without hearing them clearly, as some people’s speech into the mic was muffled). Thus, it was most definitely NOT easier to communicate with people via technology (in this case). In the future, I think placing the installation in a space where people go specifically to interact with it, rather than in a walkway, and making sure I could truly see the audience would put me more at ease.

Speaking of space, I believe I was able to use the parochial nature of the space that is Heimbold (and Sarah Lawrence) to my advantage. As everyone at the party was united by being members of Sarah Lawrence, I could make college-specific references that made my “AI”, Sam, feel surprisingly knowledgeable and surreal. I was worried this would break the Turing Test quality, but even with specific references, many people appeared to think this was a program built with true artificial intelligence. On top of all this, I think the knowledge of local culture made it easier to befriend (and even fall in love with) Sam.

The idea that I could build an AI interface upon local knowledge is a unique and tempting one. What if, instead of mass-producing general-knowledge machines, we had localized AIs that were built upon data from within a small radius or a specific community? But I digress. The specificity of this bot worked well at the Art Party, a culmination of the parochial, localized culture we are a part of. While I wrestled with some aspects of my text-to-speech library, like Sam’s difficult-to-understand accent, I don’t think a general, clear-spoken Alexa would have been as fun or interesting.