Unfortunately, I have not been able to get my project running outside of Java. However, I did manage to build a synthesizer that I am genuinely happy with, one that I think could be used to improvise or compose electronic music.

Besides tweaking the audio and game feel, one feature I implemented was color-changing particles. Each particle changes color depending on which note it is playing through the synthesizer. On top of looking way better, this gives the user visual feedback on the individual notes that make up the sound they are generating.
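The post does not say how notes are mapped to colors, so here is a minimal sketch of one possible approach in plain Java. It assumes notes are represented as MIDI note numbers and maps each note's pitch class (C, C#, D, ...) to an evenly spaced hue, so the same note in any octave gets the same color. The `NoteColor` class and `noteToColor` helper are hypothetical names for illustration; only `java.awt.Color` is a real library class.

```java
import java.awt.Color;

public class NoteColor {
    // Hypothetical helper: maps a MIDI note number to a particle color.
    // The 12 pitch classes are spread evenly around the HSB hue wheel.
    static Color noteToColor(int midiNote) {
        int pitchClass = Math.floorMod(midiNote, 12); // 0 = C, 1 = C#, ..., 11 = B
        float hue = pitchClass / 12.0f;               // hue in [0, 1): C maps to red
        // Fixed saturation and brightness keep every note vivid.
        return Color.getHSBColor(hue, 0.8f, 1.0f);
    }

    public static void main(String[] args) {
        int[] notes = {60, 64, 67, 72}; // C4, E4, G4, C5
        for (int n : notes) {
            Color c = noteToColor(n);
            System.out.printf("note %d -> rgb(%d, %d, %d)%n",
                    n, c.getRed(), c.getGreen(), c.getBlue());
        }
    }
}
```

Keying the color to pitch class rather than absolute pitch means chords read as distinct color groups, while octave doublings blend in. A variation could also scale brightness with octave.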

Next, I would like to implement an FX engine that could take this game to the next level. I would love to be able to trigger delays by shaking the tablet and see them visualized as duplicate particles scattering off into the distance. Alternatively, I could see a reverb working well here, visualized as rings spreading out from the particles.

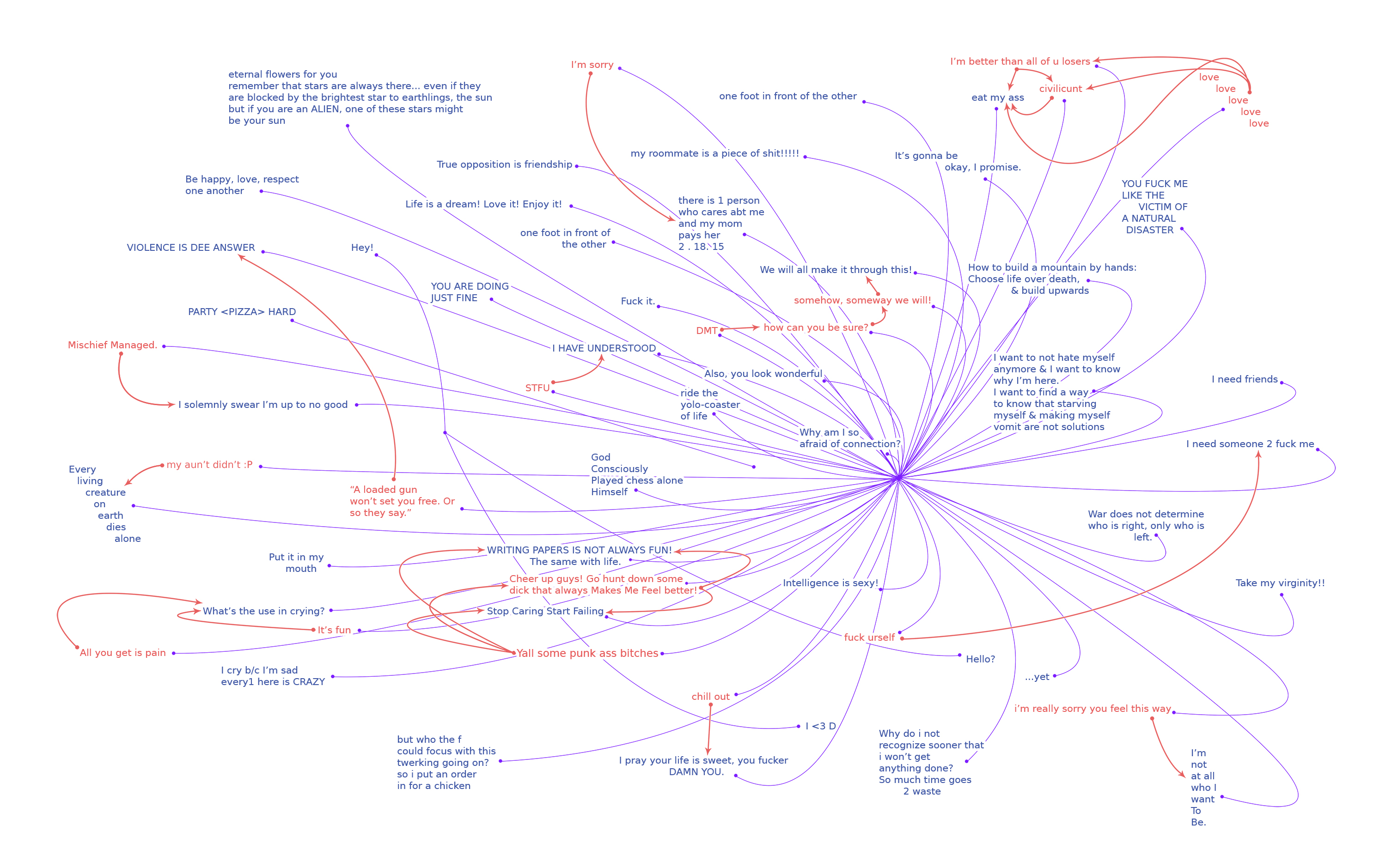

All in all, the project has inspired me to work more with code in my own artmaking. I am really interested in the possibilities of musical tools that double as visualizers. Visuals are already a big part of modern musical performance, and I wonder whether artists of the future could work with real-time art and music in some kind of hybrid way. The experience would be quite different from the status quo. Perhaps we would lose something from pure music and something from pure art, but the hybrid could generate new visual and sonic combinations that are intimately intertwined and interesting to experience.

I am bummed out that I can’t get it working on Android yet. On the bright side, the project has gotten me interested in learning more powerful languages and developing this concept further, so that is where I will be heading next.