I decided to look into Beads for audio generation within Processing. From the early stages of prototyping my seeking particle system (shown below), I found that I craved some sort of sonic representation of the particle motion onscreen. Rather than designing the sound first and then adding visuals, I decided to generate the audio from the visuals. My initial idea was to use Beads to generate a sine wave (plus a series of harmonics) for each particle onscreen, and to map each sine wave's frequency to the particle's horizontal position, from left to right.

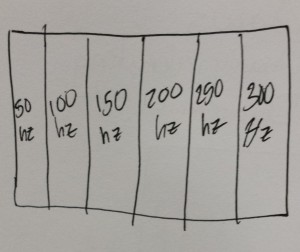
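To make the position-to-pitch idea concrete, here is a minimal sketch of the mapping in plain Java. It is not my actual sketch code: the class name `ParticleTone`, the 110 Hz to 1760 Hz range, and the choice of a linear mapping are all assumptions for illustration. In Beads, each resulting frequency would typically drive its own `WavePlayer` using `Buffer.SINE`.

```java
// Hypothetical helper; names and the frequency range are assumptions.
final class ParticleTone {
    static final double MIN_HZ = 110.0;   // assumed pitch at the left edge
    static final double MAX_HZ = 1760.0;  // assumed pitch at the right edge

    /** Linearly maps an x position in [0, width] to a frequency in [MIN_HZ, MAX_HZ]. */
    static double xToFrequency(double x, double width) {
        // Clamp so particles that drift offscreen stay in range.
        double t = Math.max(0.0, Math.min(1.0, x / width));
        return MIN_HZ + t * (MAX_HZ - MIN_HZ);
    }

    /** Returns the fundamental followed by its integer-multiple harmonics. */
    static double[] harmonics(double fundamental, int count) {
        double[] out = new double[count];
        for (int i = 0; i < count; i++) {
            out[i] = fundamental * (i + 1);  // 1x, 2x, 3x, ...
        }
        return out;
    }
}
```

With a continuous mapping like this, every particle lands on a slightly different frequency, so a crowd of particles produces a dense cluster of unrelated pitches.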

The one-sine-wave-per-particle approach ended up being less musical than I was hoping. Lots of different frequencies sounding together resemble wind or surf (which is why synthesizers use noise generators to synthesize those sounds). I wanted something more musical, where I could distinguish pure notes. I brainstormed a bit and realized that if I divided the screen into sections, I could lay a musical keyboard over it, and that if I tweaked the harmonic relationships between the sections, the particles would generate harmonies even when they were far apart.
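The screen-as-keyboard idea can be sketched roughly as follows, again in plain Java. The class name `ScreenKeyboard`, the major pentatonic scale, and the 220 Hz base pitch are assumptions chosen for illustration, not the tuning I actually settled on. The point is only that each vertical section snaps to a note from a fixed scale, so any two particles sound consonant together.

```java
// Hypothetical helper; the scale and base pitch are assumptions.
final class ScreenKeyboard {
    // Semitone offsets of a major pentatonic scale (assumed choice).
    static final int[] SCALE = {0, 2, 4, 7, 9};
    static final double BASE_HZ = 220.0;  // assumed pitch of the leftmost section

    /** Returns which of `columns` equal-width sections contains x. */
    static int columnFor(double x, double width, int columns) {
        int c = (int) Math.floor(x / width * columns);
        return Math.max(0, Math.min(columns - 1, c));  // clamp edge cases
    }

    /** Maps a section index to a scale note, climbing an octave each time the scale repeats. */
    static double columnToFrequency(int column) {
        int octave = column / SCALE.length;
        int degree = column % SCALE.length;
        int semitones = 12 * octave + SCALE[degree];
        // Equal temperament: each semitone multiplies frequency by 2^(1/12).
        return BASE_HZ * Math.pow(2.0, semitones / 12.0);
    }
}
```

Because every section is drawn from the same scale, a particle on the far left and one on the far right still form a recognizable interval rather than an arbitrary clash.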

The keyboard layout ended up working better than I expected! At this point I began tweaking what I had to get a game feel I was happier with. I was also playtesting with the goal of making it a viable instrument… an instrument you could play with and get lost in, or that someone might want to sample from.