Tag: conference

Diary Forms: 15 Years

Before returning to school for spring semester this year, I spent my time transferring 15-year-old home movies to my computer. I knew that these videos would come in handy for this course since my favorite works of art focus on…

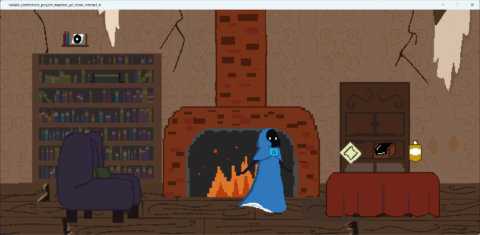

Art From Code: Hearth and Heart

The inspiration for this piece comes from a meme. It is a picture of a man who has put several tuba parts on various limbs and is posing like a knight. This meme spawned various tracks which combined the Tuba,…

Drawing Machines: What Can We Find on a Night Walk in the Woods?

The culmination of my conference work for this class is a twelve-image series titled “What can we find on a night walk in the woods?” I explore curiosity, discovery, wonder, and the senses through the setting of a night walk…

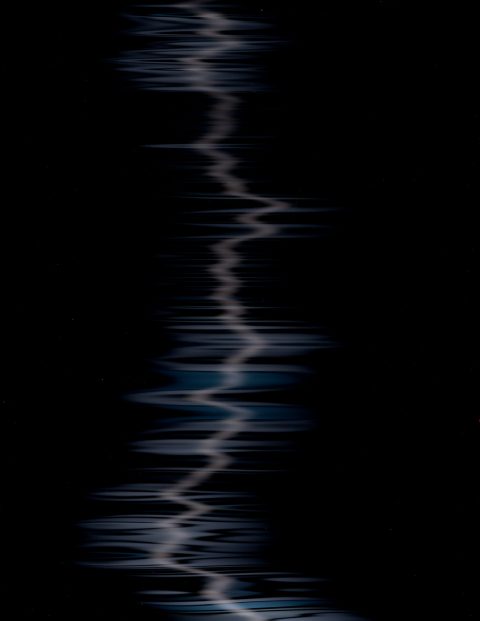

Systems Aesthetics: A Final System: Filters

My final conference project is called Filters. It was a long, hard journey to get here, but I am very happy with the end result. I first became interested in the camera function and using the camera library to…

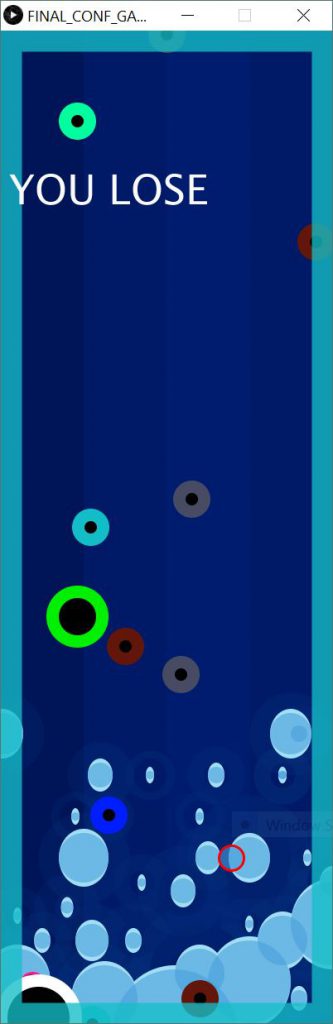

Systems Aesthetics: A Final System: Collision Game

For the first check-in of our systems class, I made a simple video game whose goal was to reach a checkpoint while avoiding collisions with different obstacles. In the background, I put a PNG of a cyber-city sky…

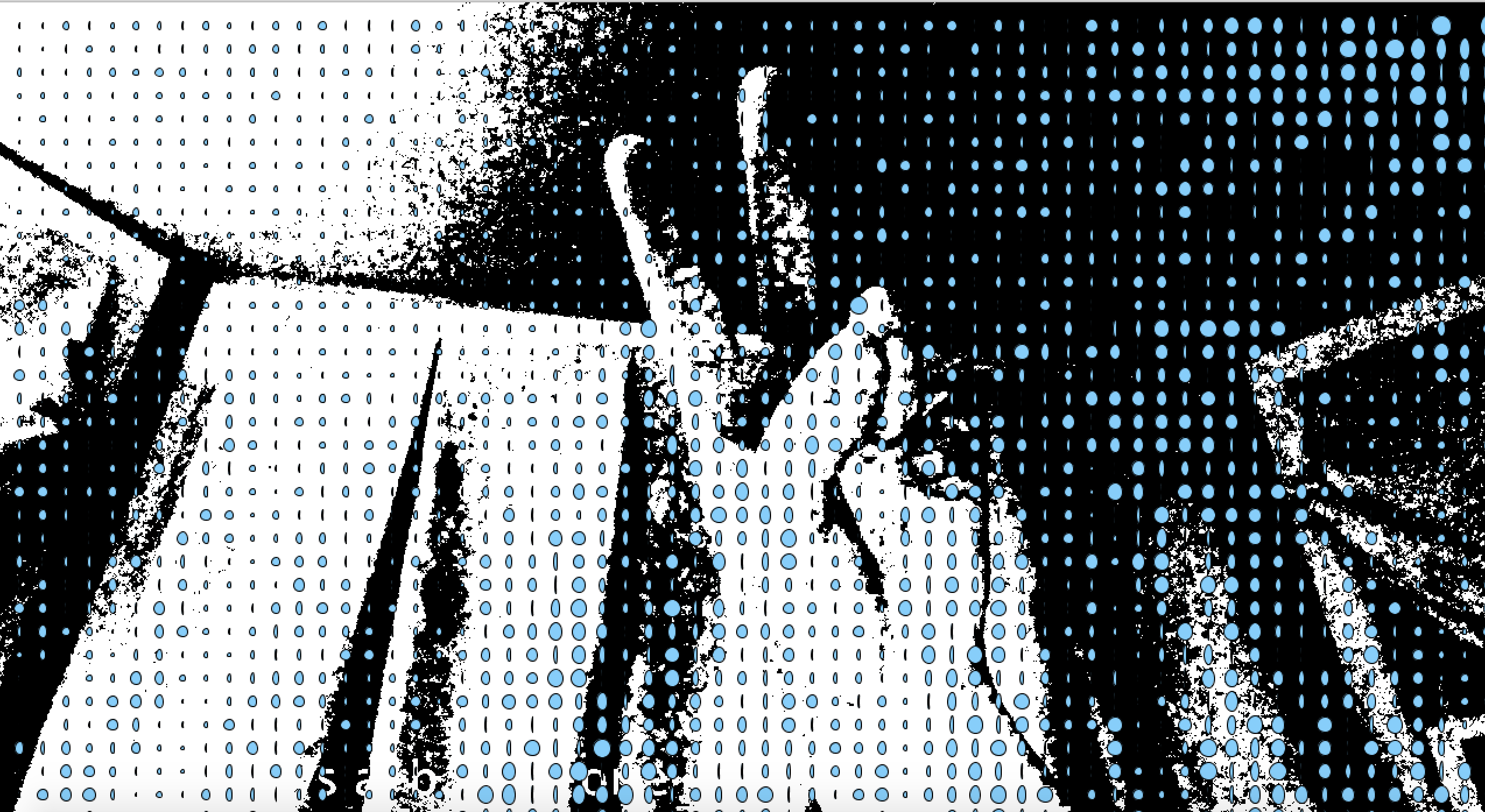

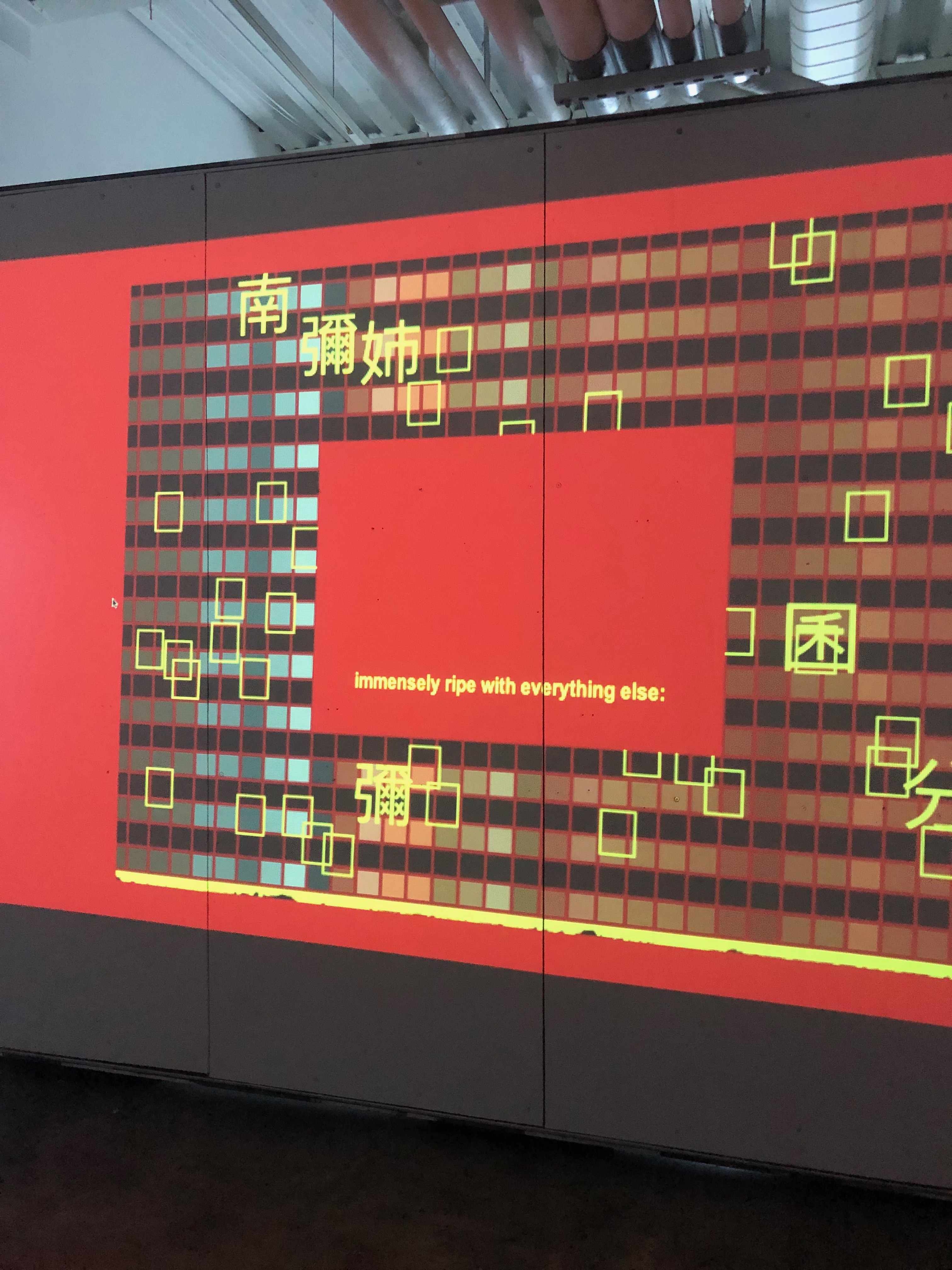

Systems Aesthetics: A Final System: Glitch It

For this final system conference project, I was inspired by the check-in assignment we did for the pixel sorting, the research we did on Rosa Menkman in class, and the reading we did on Found Systems As Glitch Culture by…

Systems Aesthetics: A Final System: mommy n me

For “Art From Code” last semester, my conference project explored software mirrors and live video feeds. I realized early on in the semester that this piece was already a sort of system: 1) it never became an object as much…

Systems Aesthetics: A Final System: Let-It-Fly

I conceived of this project when I was playing with children’s puzzle pieces. I wondered: ‘These puzzles are clearly a system. They take on similar visual forms that are lucidly defined, yet contain distinct information. The shapes complement each other,…

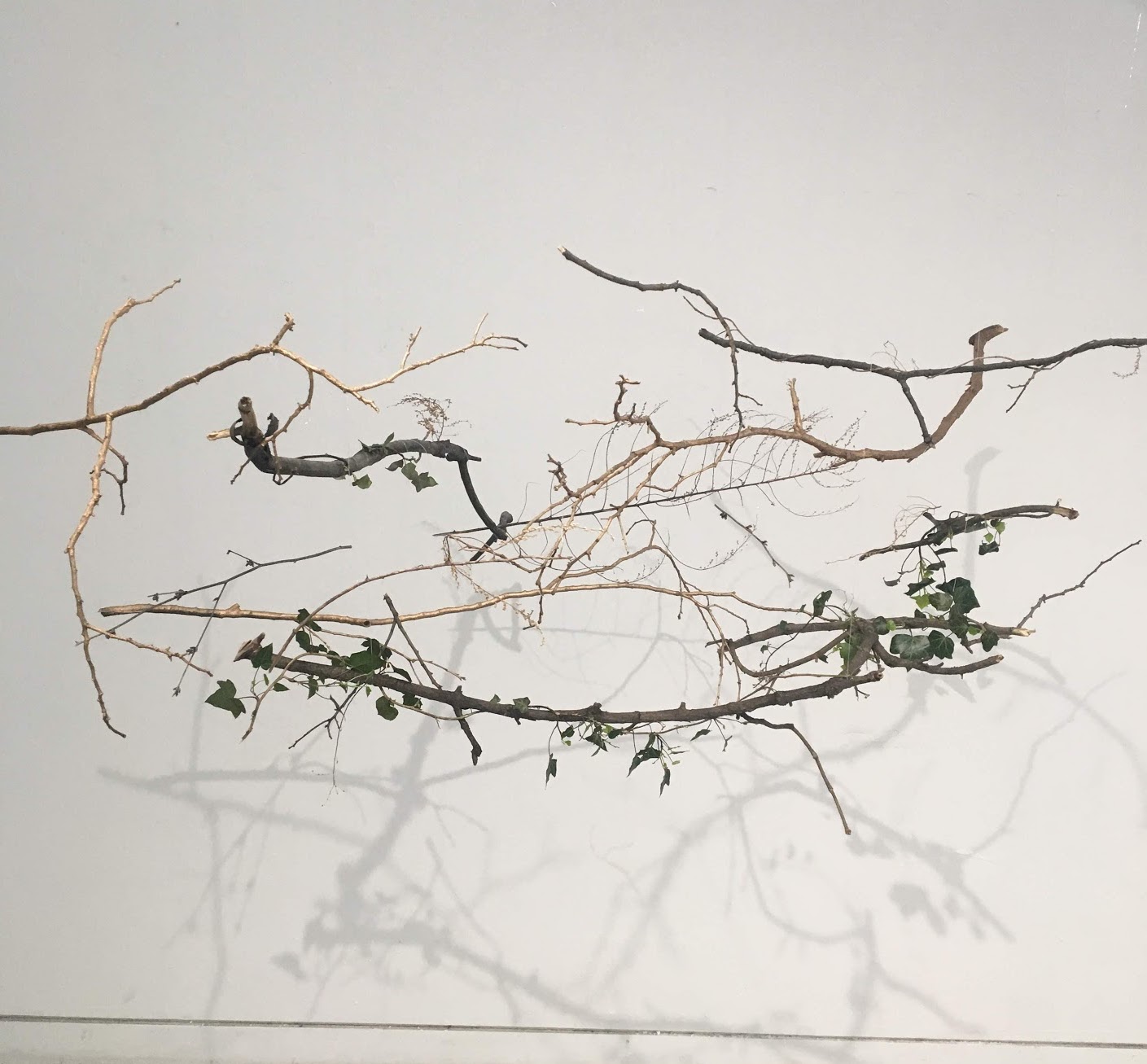

Drawing Machines: Exploded Nest

I am going to construct a hanging, nest-like installation made of found branches, sticks, ivy, and dried flowers. I will hang vine charcoal from the branches, using a pendulum-like motion to create a drawing on a piece of newsprint that…

Drawing Machines: Conference Project

The initial goal of my conference project was to apply Hans Haacke’s methodology to a drawing machine. His institutional critique and social critique seemed rather far removed from the relatively simple drawing machines that we had been creating thus far….

Drawing Machines: Spinning Wheel

For my conference project I will be making a spinning wheel. I was attracted to this idea after doing a bit of research and coming upon this machine that someone created, which then prompted me to want to recreate it….

Drawing Machines: Scratch

My conference work for this semester is a coded drawing machine. The mouse works as an eraser, which makes the black cover transparent and exposes the colorful bottom plate. The uncovered parts are what viewers draw. The bottom plate is…

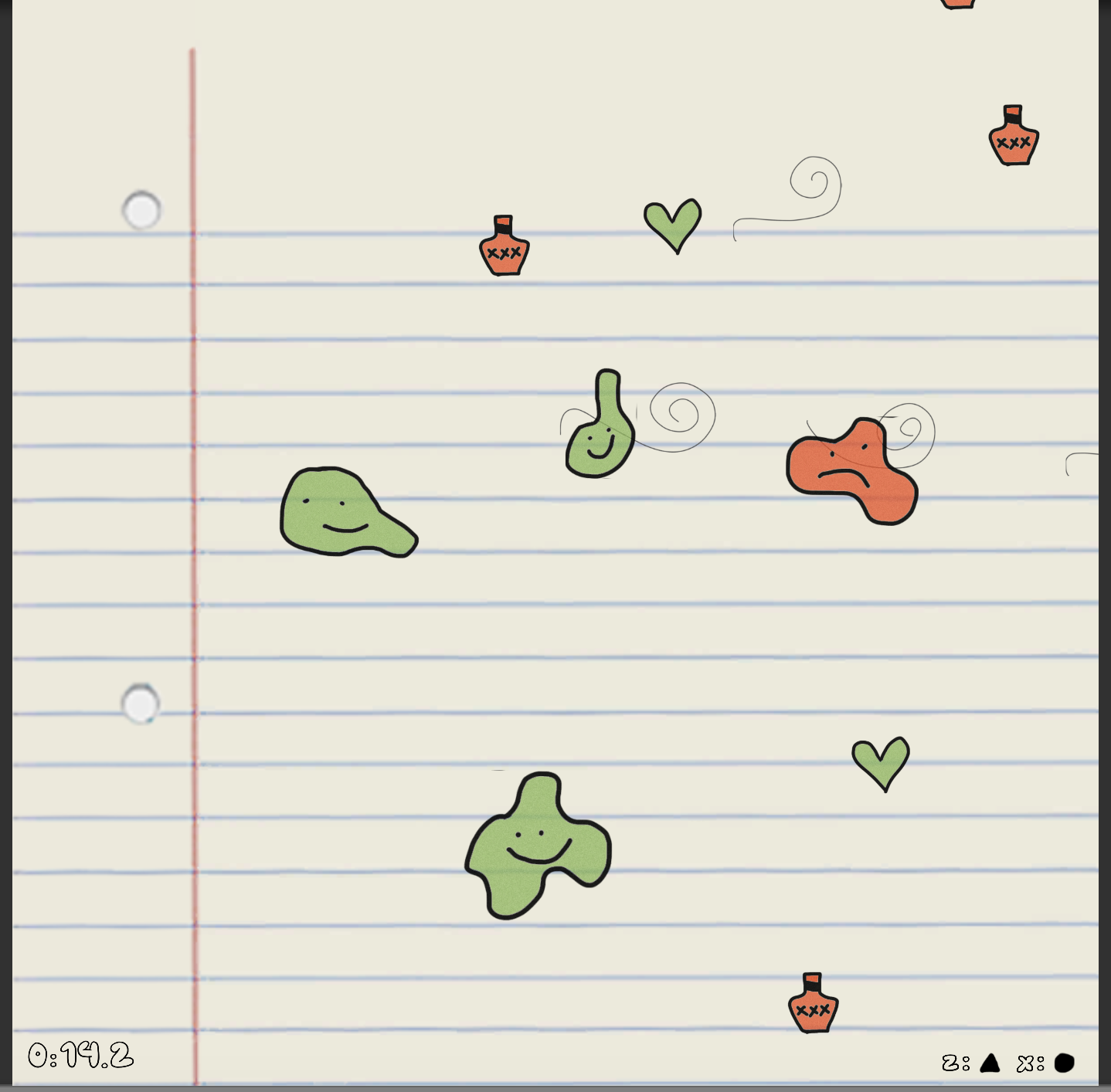

Level Design: In Loving Memory

(content warning: grief) The game I built for my conference work is a little bit different from the other games I’ve built in the past year. The basic world metaphor for my game comes from my experience with grief within…

Level Design: Safety Mania

Starting on the conference portion of the game, I knew that I wanted to work on the mechanics of this game, mostly refining the ones that existed. I also wanted to work on the look of the game because, while…

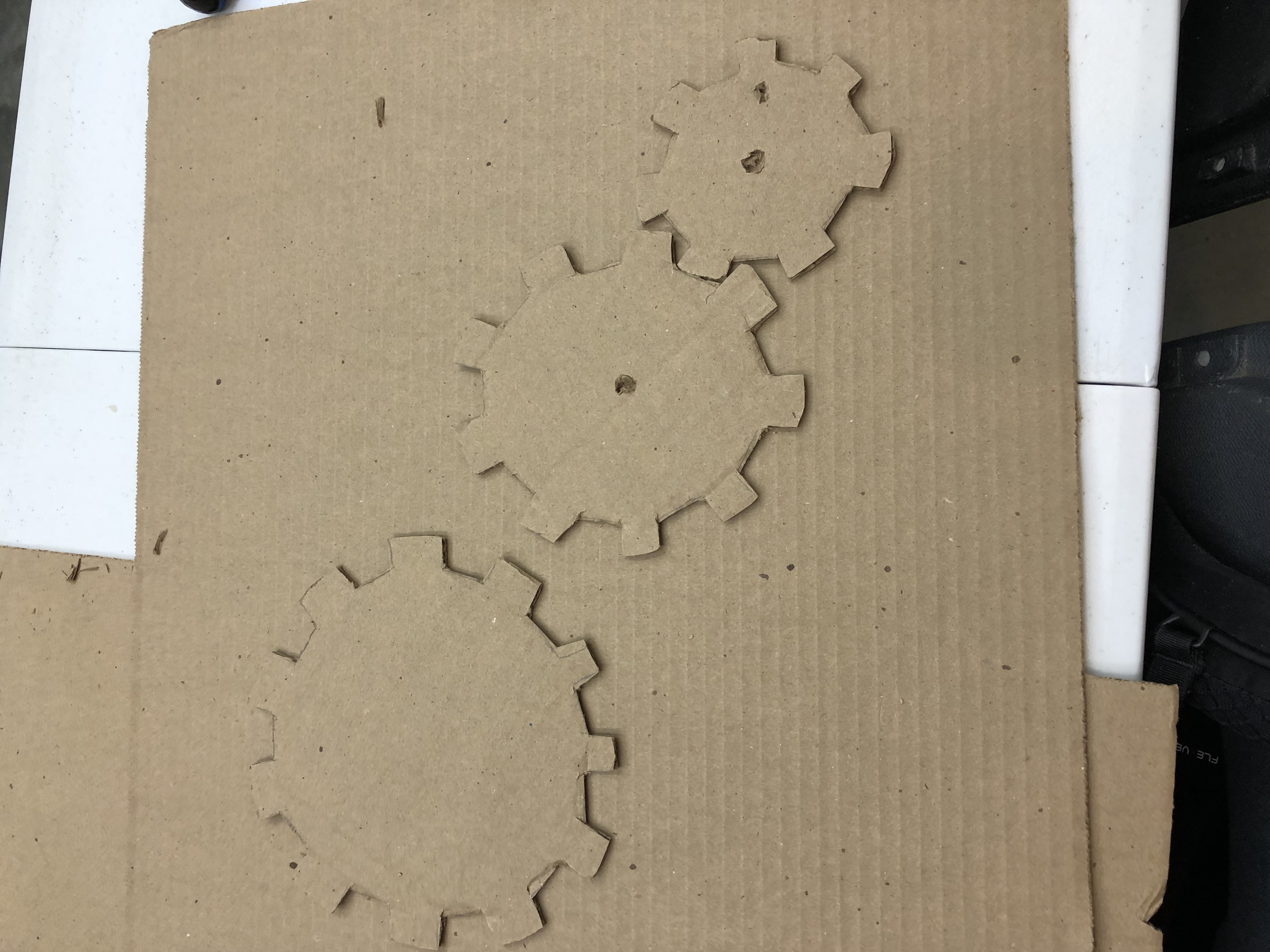

Drawing Machines: Resistance

When I first started to think of what I wanted to do for my final conference project, something that really interested me was gears. I definitely wanted to incorporate gears into my project somehow. Whether that be the gears are part…

Art From Code: Portrait of the Self as Anything But

For conference, I was most inspired by Bret Victor’s call to stop drawing “dead fishes,” a metaphor suggesting that computer art should not seek to replicate older mediums but rather take advantage of what is only possible in…

Art From Code: Tattoos From Code

This series encompasses works inspired by a variety of currently active tattoo artists. However, these artists all use traditional methods of creating art. Tattoo artists often create an image on paper before transferring it to skin. In this case, I…

Drawing Machines: Impossible Machine

This machine was meant to reflect the artist we researched. However, because I wasn’t researching an artist but a philosopher, I was given a level of freedom that my other classmates were not afforded. I started by thinking about the…

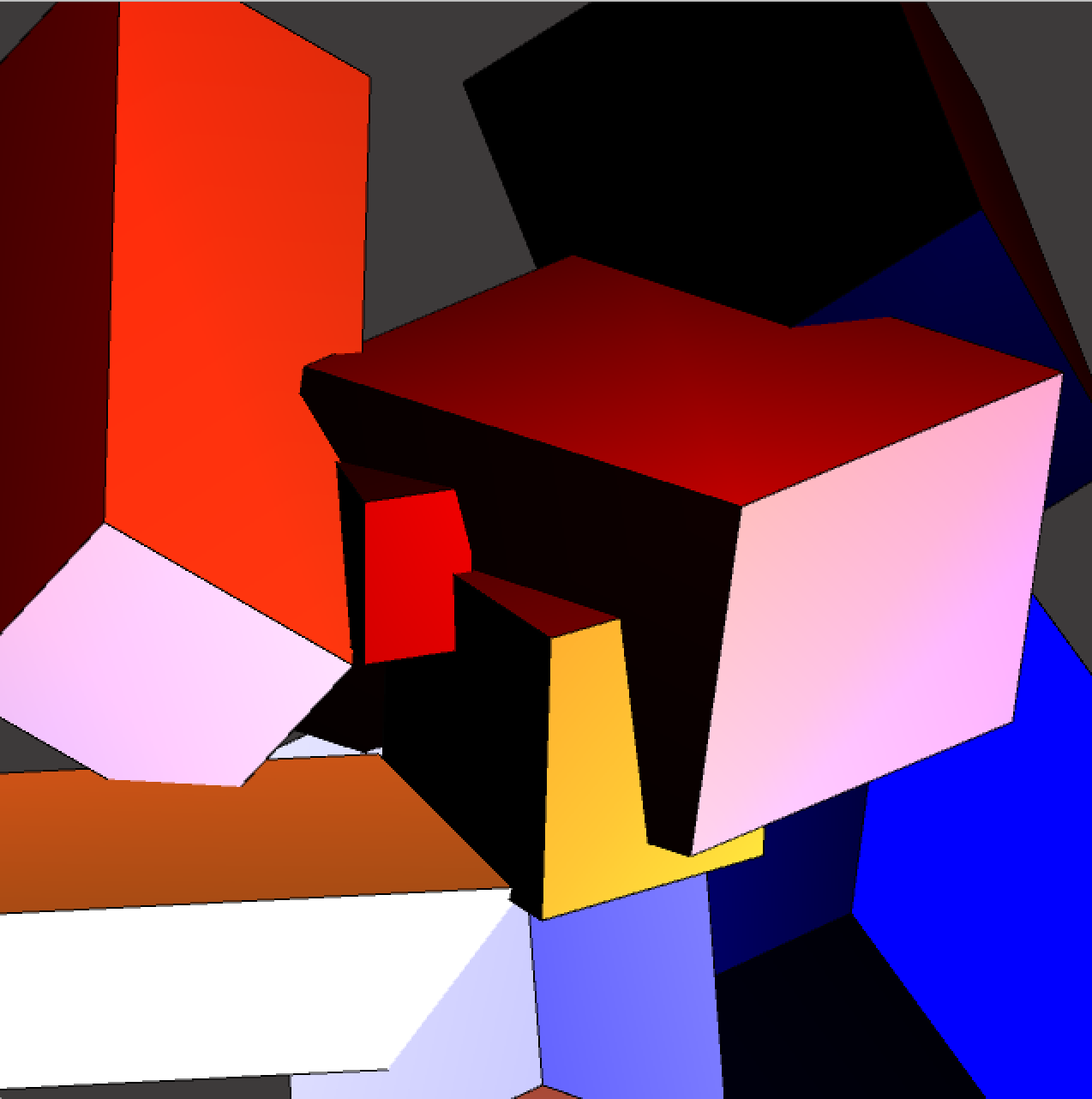

Art From Code: Getting Into It

I created a series of 3D works inspired by the artist Piet Mondrian. Mondrian is known as one of the pioneers of 20th-century abstract art. He changed his artistic direction from figurative painting to an…

Digital Tools for Artists: Cloudbirth

When I signed up to take this course, I knew that I ultimately wanted to learn digital art skills that could pair with the electronic music that has been my primary artistic practice for the last couple of years. Since…